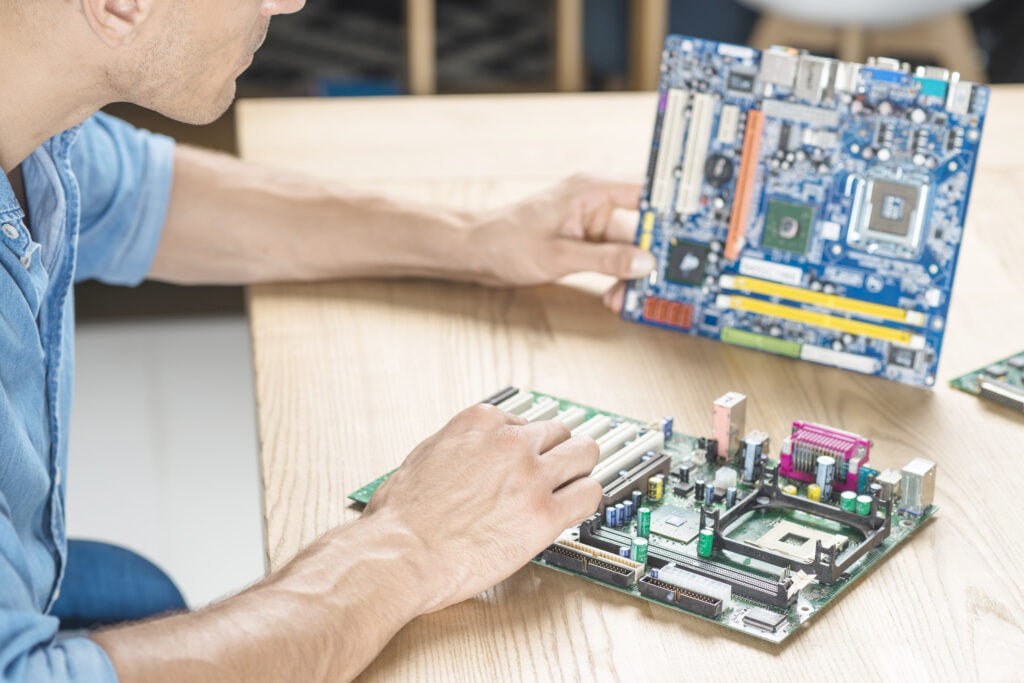

The demand for real-time data processing and low-latency applications is growing rapidly in today’s fast-moving technological landscape. Edge computing, a distributed computing model in which data is processed at the network’s edge rather than in centralized data centers, meets this demand. By bringing processing power and intelligence closer to where data is generated, edge computing enables faster response times, lower latency, and improved privacy and security.

The success of edge computing depends on processing data efficiently at these distributed locations. This is where Field-Programmable Gate Arrays (FPGAs) come in. FPGAs are reconfigurable hardware devices that have become popular accelerators for a variety of edge-computing workloads.

This article examines the intersection of FPGAs and edge computing, covering the core ideas, use cases, programming approaches, and comparative benefits of FPGAs over conventional processors such as CPUs and GPUs. We will also look at real-world case studies, challenges, and emerging trends that are shaping how this powerful combination of technologies will develop.

FPGA in Edge Computing: Key Concepts

Field-Programmable Gate Arrays (FPGAs) have become pivotal components in the landscape of edge computing due to their unique characteristics and capabilities. In this section, we will delve into the key concepts that define the role of FPGAs in edge computing.

A. FPGA’s Role in Edge Devices

FPGAs are valuable in edge devices because they provide hardware acceleration. Unlike general-purpose processors, which execute a stream of instructions largely sequentially, FPGAs can be configured to carry out specific operations in parallel. For suitable workloads, this parallelism lets them process data at significantly higher speeds, which makes them a strong choice for real-time edge applications.

Edge devices such as IoT sensors, cameras, and autonomous vehicles produce large volumes of data. Because FPGAs can preprocess this data locally, there is no need to send massive amounts of raw data to centralized data centers. This lowers latency and also reduces bandwidth and cloud computing costs.
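As a rough illustration of local preprocessing, the sketch below condenses a stream of raw sensor readings into one small summary per window and flags windows that cross an alarm threshold, so only the summaries would need to leave the device. It is plain Python modeling the logic, not FPGA code; the window size, threshold, and `WindowSummary` record are hypothetical choices.

```python
from dataclasses import dataclass
from statistics import fmean
from typing import List


@dataclass
class WindowSummary:
    """Compact record sent upstream instead of the raw samples (hypothetical format)."""
    minimum: int
    maximum: int
    mean: float
    alarm: bool  # True if any sample in the window exceeded the threshold


def summarize(samples: List[int], window: int, threshold: int) -> List[WindowSummary]:
    """Reduce raw sensor samples to one summary per non-overlapping window.

    A trailing partial window, if any, is summarized as-is.
    """
    summaries = []
    for start in range(0, len(samples), window):
        chunk = samples[start:start + window]
        peak = max(chunk)
        summaries.append(WindowSummary(min(chunk), peak, fmean(chunk), peak > threshold))
    return summaries


# Hypothetical stream: 1,000 readings that hover between 20 and 26, plus one spike.
raw = [20 + (i % 7) for i in range(1000)]
raw[500] = 95
out = summarize(raw, window=100, threshold=80)
print(len(raw), "readings ->", len(out), "summaries")
print([w.alarm for w in out])
```

With these assumed parameters, 1,000 readings shrink to 10 summaries, and only the window containing the spike is flagged.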

B. Accelerating Edge Workloads with FPGAs

Edge computing often relies on devices with limited resources and processing power. Offloading computationally demanding tasks from the CPU or GPU to an FPGA can increase performance. Applications such as image and video processing, machine learning inference, and encryption benefit greatly from this acceleration.

Through custom hardware designs, FPGAs can be tailored to specific edge workloads, producing highly efficient, optimized solutions. This flexibility lets developers fine-tune FPGA-based systems to their needs and strike a balance between performance and power efficiency.
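One common way an FPGA design is tailored to a workload is by choosing a custom numeric format: narrow fixed-point values need fewer logic and DSP resources than floating point. The sketch below emulates a fixed-point dot product with integer arithmetic in Python; the 8 fractional bits (`FRAC_BITS`) are an illustrative assumption, and a real design would pick its bit widths after analyzing the accuracy the application needs.

```python
FRAC_BITS = 8            # assumed format: 8 fractional bits
SCALE = 1 << FRAC_BITS   # 2**8 = 256


def to_fixed(x: float) -> int:
    """Quantize a real value to a fixed-point integer (round to nearest, ties to even)."""
    return round(x * SCALE)


def to_float(q: int) -> float:
    """Convert a fixed-point integer back to a real value."""
    return q / SCALE


def fixed_dot(a, b) -> int:
    """Dot product using only integer multiply-accumulate, as a DSP block would.

    Each product carries 2 * FRAC_BITS fractional bits, so the accumulated sum
    is shifted right once at the end to return to FRAC_BITS (truncating toward
    negative infinity, like an arithmetic right shift).
    """
    acc = 0
    for x, y in zip(a, b):
        acc += to_fixed(x) * to_fixed(y)
    return acc >> FRAC_BITS


weights = [0.5, -0.25, 0.125]
inputs = [1.0, 2.0, 4.0]
print(to_float(fixed_dot(weights, inputs)))  # prints 0.5
```

Shifting once after accumulation, rather than after every product, keeps the full precision of the intermediate sum, which is also a common choice in hardware accumulators.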

C. Integration of FPGAs in Edge Computing Systems

Integrating FPGAs into edge computing systems requires careful attention to several issues, including hardware compatibility, software support, and power considerations. Crucial aspects of FPGA integration include:

Hardware Compatibility: The FPGA must be compatible with the edge device’s architecture and interfaces. This often means choosing FPGA modules that integrate seamlessly or building specialized FPGA boards.

Software Stack: Software is needed both to program FPGAs and to communicate with them at run time. High-level synthesis (HLS) tools help with the first task by converting code written in high-level languages such as C or C++ into hardware designs that can then be compiled into FPGA configurations.

Power Management: Edge devices often run on batteries or have strict power budgets. Effective power management is essential to keep FPGAs from draining the device’s battery excessively.
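A back-of-the-envelope energy budget helps decide how aggressively an FPGA accelerator must be duty-cycled on a battery-powered device. The sketch assumes the device alternates between an active state and an idle state; every number in it is a hypothetical placeholder rather than a measurement of real hardware.

```python
def average_power_mw(active_mw: float, idle_mw: float, duty_cycle: float) -> float:
    """Time-weighted average power for a device that is active `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_mw + (1.0 - duty_cycle) * idle_mw


def battery_life_hours(capacity_mwh: float, avg_mw: float) -> float:
    """Ideal battery life, ignoring conversion losses and battery aging."""
    return capacity_mwh / avg_mw


# Hypothetical figures: 500 mW while processing, 20 mW idle, 10% duty cycle,
# and a 10,000 mWh battery.
avg = average_power_mw(active_mw=500, idle_mw=20, duty_cycle=0.10)
print(avg, "mW average,", battery_life_hours(10_000, avg), "hours")
```

With these assumed figures the idle state still accounts for about a quarter of the average draw (18 of 68 mW), so idle power cannot be ignored when the duty cycle is low.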

Scalability: As edge computing environments grow, the scalability of FPGA-based solutions becomes crucial. Designing systems that can easily scale to accommodate increasing workloads is essential for long-term success.

Use Cases of FPGAs in Edge Computing

Field-Programmable Gate Arrays (FPGAs) have found a myriad of applications in edge computing due to their ability to accelerate specific workloads efficiently and with low latency. Let’s explore some prominent use cases where FPGAs are making a significant impact in the edge computing landscape:

A. Real-time Video Analytics at the Edge

Surveillance Systems: By enabling real-time video analytics such as object recognition, facial recognition, and license plate recognition directly at the camera, FPGAs improve the capabilities of security cameras and eliminate the need to send massive video streams to centralized servers.

Retail and Marketing: Retailers use FPGAs to analyze in-store video feeds for customer behavior, foot traffic patterns, and inventory levels, supporting better decision-making and more personalized marketing.

Automotive: In autonomous vehicles, FPGAs process sensor data from cameras and LiDAR systems to detect and respond to objects and road conditions in real time, contributing to safer driving experiences.

B. IoT Sensor Data Processing

Smart Agriculture: FPGAs are used in precision agriculture to track crop health, weather patterns, and soil characteristics. They analyze sensor data to optimize the use of fertilizer and irrigation.

Industrial IoT (IIoT): FPGAs are used in industrial settings to gather and process data from sensors on factory floors, enabling real-time equipment performance monitoring, preventative maintenance, and quality control.

Healthcare Wearables: FPGA-equipped wearables can locally assess vital signs and health data, enabling the early identification of health issues and obviating the need for constant data transmission to the cloud.

C. Autonomous Vehicles and Edge AI

Self-Driving Cars: FPGAs enable rapid decision-making in autonomous vehicles by processing data from sensors such as cameras, LiDAR, and radar, helping the vehicle navigate complex environments in real time.

Drones: Unmanned aerial vehicles (UAVs) use FPGAs to process data from onboard cameras and sensors, enabling autonomous flight, obstacle avoidance, and object recognition.

Robotics: Edge AI powered by FPGAs plays a crucial role in robotics applications, including manufacturing robots, delivery drones, and healthcare robots, enhancing their perception and decision-making capabilities.

D. Industrial Automation and Robotics

Factory Automation: FPGAs are integral to industrial robots, ensuring precise and real-time control of movements and processes in manufacturing environments.

Logistics and Warehousing: In logistics and warehouse management, FPGAs optimize inventory tracking, order fulfillment, and automated material handling systems.

Energy Sector: FPGAs are employed in the energy sector for monitoring and controlling power grids, optimizing energy distribution, and improving grid resilience.

E. Healthcare and Telemedicine Applications

Medical Imaging: FPGAs enhance medical imaging devices such as MRI and CT scanners by accelerating image processing, reducing scan times, and improving diagnostic accuracy.

Telemedicine: In remote patient monitoring, FPGAs enable the processing of data from various medical sensors, ensuring timely alerts and efficient use of healthcare resources.

Genomic Analysis: FPGAs contribute to the acceleration of genomic data analysis, aiding in the understanding of genetic diseases and personalized medicine.

FPGA Programming for Edge Computing

Programming is what allows FPGAs to accelerate specific workloads effectively at the edge. Unlike conventional CPUs, FPGAs are highly configurable, so developers can design hardware accelerators tailored to the demands of edge applications. This section covers the fundamentals of FPGA programming for edge computing.

A. Designing FPGA Accelerators for Edge Workloads

Designing FPGA accelerators involves creating hardware configurations that perform specific tasks faster and more efficiently than software running on a CPU or GPU. Here are key considerations:

Workload Analysis: Start by analyzing the workload or algorithm you want to accelerate. Identify the resource-intensive operations and bottlenecks that could benefit from FPGA acceleration.
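Once profiling has identified a hotspot, Amdahl’s law gives a quick upper bound on what offloading it can achieve, because only the accelerated fraction of the runtime gets faster. The sketch below applies the formula to a hypothetical profile; it ignores the cost of moving data to and from the FPGA, which reduces the real gain.

```python
def amdahl_speedup(accelerated_fraction: float, kernel_speedup: float) -> float:
    """Overall speedup when only part of the runtime is accelerated (Amdahl's law).

    accelerated_fraction: share of total runtime spent in the offloaded kernel (0..1)
    kernel_speedup: how much faster the FPGA runs that kernel than the original code
    """
    if not 0.0 <= accelerated_fraction <= 1.0 or kernel_speedup <= 0:
        raise ValueError("invalid fraction or speedup")
    serial = 1.0 - accelerated_fraction  # portion that is not accelerated
    return 1.0 / (serial + accelerated_fraction / kernel_speedup)


# Hypothetical profile: the hotspot takes 80% of runtime and the FPGA runs it 10x faster.
print(round(amdahl_speedup(0.8, 10.0), 2))   # 3.57
# Even an (almost) infinitely fast accelerator is capped near 1 / (1 - 0.8) = 5x.
print(round(amdahl_speedup(0.8, 1e12), 2))   # 5.0
```

The bound explains why workload analysis comes first: accelerating a kernel that accounts for a small share of the runtime yields little overall benefit, however fast the FPGA implementation is.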

Parallelism: Take advantage of FPGA parallelism by dividing the workload into smaller tasks that can execute concurrently. FPGAs excel at this kind of parallel processing, which is valuable for edge applications that need real-time responses.
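The sketch below models this idea for a simple reduction: the input is split across several independent lanes that, in hardware, would be separate adders working in the same clock cycle. It is a Python model of the partitioning, not FPGA code; the assumption that each lane consumes one element per step, and the lane counts used, are illustrative.

```python
import math


def parallel_sum(values, lanes):
    """Split a reduction across `lanes` independent accumulators.

    Lane k handles elements k, k + lanes, k + 2*lanes, ... Returns the total
    and the number of steps the lanes need if each consumes one element per step.
    The small adder tree that combines the partial sums is not counted.
    """
    partials = [sum(values[k::lanes]) for k in range(lanes)]
    steps = math.ceil(len(values) / lanes)
    return sum(partials), steps


data = list(range(1, 1001))
for lanes in (1, 4, 16):
    total, steps = parallel_sum(data, lanes)
    print(lanes, "lanes:", total, "in", steps, "steps")
```

In this model, going from 1 to 16 lanes cuts the step count from 1,000 to 63, at the cost of 16 times the adder hardware.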

Data Flow: Understand the data flow of your application. Design FPGA accelerators to minimize data movement, as data transfer can consume significant energy and time. Co-locating data and computation on the FPGA can enhance efficiency.
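On an FPGA, minimizing data movement often means chaining processing stages with streams (FIFOs), so each sample flows through every stage without being written back to external memory in between. Python generators offer a loose software analogy: each stage pulls one item at a time and passes it on, so no full intermediate array is ever built. The stage names, offset, clamp range, and tap count below are hypothetical.

```python
from collections import deque
from typing import Iterable, Iterator


def remove_offset(stream: Iterable[int], offset: int) -> Iterator[int]:
    """Stage 1: subtract a fixed sensor offset from each sample."""
    for x in stream:
        yield x - offset


def clamp(stream: Iterable[int], lo: int, hi: int) -> Iterator[int]:
    """Stage 2: saturate each sample to the range [lo, hi]."""
    for x in stream:
        yield max(lo, min(hi, x))


def moving_sum(stream: Iterable[int], taps: int) -> Iterator[int]:
    """Stage 3: sum of the last `taps` samples, held in a small local buffer."""
    window = deque(maxlen=taps)
    for x in stream:
        window.append(x)
        if len(window) == taps:
            yield sum(window)


# Stages are chained lazily: each sample passes through all three stages
# before the next raw sample is read.
raw = [105, 110, 300, 95, 120]
pipeline = moving_sum(clamp(remove_offset(raw, offset=100), lo=0, hi=50), taps=2)
print(list(pipeline))
```

Only the two-sample window in the last stage holds state, which mirrors how a streaming FPGA design keeps small buffers next to the logic that uses them.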

Algorithm Optimization: Tailor your algorithms for FPGA implementation. Techniques such as loop unrolling, pipelining, and custom data structures can be employed to maximize FPGA performance.
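Pipelining and loop unrolling can be reasoned about with a simple cycle-count model often used for HLS loops: a pipeline of depth `D` that starts a new iteration every `II` cycles (the initiation interval) finishes `N` iterations in roughly `D + (N - 1) * II` cycles, versus `N * D` without pipelining. The sketch assumes enough hardware resources and memory bandwidth to sustain the chosen `II` and unroll factor; the loop parameters are hypothetical.

```python
import math


def sequential_cycles(n: int, depth: int) -> int:
    """No pipelining: each iteration runs all `depth` stages before the next starts."""
    return n * depth


def pipelined_cycles(n: int, depth: int, ii: int = 1, unroll: int = 1) -> int:
    """Pipelined loop: a new iteration enters the pipeline every `ii` cycles.

    With an unroll factor, `unroll` copies of the loop body run side by side,
    so only ceil(n / unroll) pipeline iterations are needed.
    """
    iters = math.ceil(n / unroll)
    if iters == 0:
        return 0
    return depth + (iters - 1) * ii


# Hypothetical loop: 1,024 iterations through a 5-stage datapath.
print(sequential_cycles(1024, 5))                  # 5120
print(pipelined_cycles(1024, 5, ii=1))             # 1028
print(pipelined_cycles(1024, 5, ii=1, unroll=4))   # 260
```

The model also shows why a higher initiation interval hurts: with `II = 2`, the same pipelined loop takes roughly twice as many cycles.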

Memory Hierarchy: Optimize memory usage, as memory access times can be a limiting factor. FPGAs have on-chip memory resources that can be utilized effectively to reduce latency.
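A classic example of exploiting on-chip memory is the line buffer used for image filters: a 3x3 filter over a streamed image only needs the most recent rows, not the whole frame. For a 1920-pixel-wide, 8-bit image, three rows take 5,760 bytes, which fits easily in on-chip RAM, while a full 1920x1080 frame takes about 2 MB. The Python sketch below models this access pattern with a 3x3 box sum; it is an illustration, not synthesizable code.

```python
from collections import deque
from typing import Iterable, Iterator, List


def box3x3_streaming(rows: Iterable[List[int]]) -> Iterator[List[int]]:
    """3x3 box sum over an image that arrives one row at a time.

    Only the three most recent rows are kept (the "line buffer"), mirroring an
    FPGA design that holds a few rows in on-chip RAM instead of the full frame.
    Only fully covered ("valid") positions are produced; there is no padding.
    """
    line_buffer = deque(maxlen=3)  # the oldest row is evicted automatically
    for row in rows:
        line_buffer.append(row)
        if len(line_buffer) < 3:
            continue  # not enough rows yet for a full 3x3 window
        top, mid, bot = line_buffer
        yield [
            sum(top[c:c + 3]) + sum(mid[c:c + 3]) + sum(bot[c:c + 3])
            for c in range(len(row) - 2)
        ]


image = [
    [1, 1, 1, 1],
    [1, 2, 1, 1],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]
for out_row in box3x3_streaming(image):
    print(out_row)
```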

B. Programming Languages and Tools for FPGAs

Programming FPGAs has traditionally required hardware description languages (HDLs) such as Verilog and VHDL. However, high-level synthesis (HLS) tools and newer programming frameworks have made modern FPGA development more approachable. Common programming options include:

HDLs: For low-level control and customization, Verilog and VHDL are still widely used in FPGA programming.

High-Level Synthesis (HLS): HLS tools such as Vivado HLS (since succeeded by Vitis HLS), Intel HLS, and Xilinx SDSoC let developers write algorithms in high-level languages such as C, C++, or OpenCL, which the toolchain then converts into hardware designs and, ultimately, FPGA configurations.

OpenCL: OpenCL is a framework that enables heterogeneous computing, including FPGA acceleration. It offers a standard programming model for FPGAs, GPUs, and CPUs.

Frameworks: Frameworks such as Intel’s oneAPI and Xilinx’s Vitis provide a unified development environment for programming FPGAs and other accelerators.

C. Challenges in FPGA Programming for Edge

While FPGA programming offers significant advantages, it also presents challenges:

Steep Learning Curve: FPGA programming can be complex, especially for those new to hardware development. Learning the intricacies of FPGA architecture and toolchains can take time.

Resource Constraints: FPGAs have limited resources, including logic cells, memory, and DSP blocks. Efficiently utilizing these resources can be challenging.
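A simple budget check, run early, can reveal whether a set of accelerators will fit on the target device. The sketch sums per-kernel resource estimates (the kind of figures an HLS synthesis report provides) and compares them with the device capacity using a safety margin, since designs close to full utilization are harder to route and to close timing on. The device capacities, kernel names, estimates, and 80% headroom are all hypothetical.

```python
# Hypothetical device capacity and per-kernel estimates.
DEVICE = {"LUT": 70_000, "DSP": 360, "BRAM": 140}

KERNELS = {
    "conv2d": {"LUT": 32_000, "DSP": 220, "BRAM": 60},
    "resize": {"LUT": 9_000, "DSP": 24, "BRAM": 20},
    "crypto": {"LUT": 21_000, "DSP": 0, "BRAM": 12},
}


def utilization(kernels, device):
    """Fraction of each device resource used by all kernels combined."""
    totals = {r: sum(k.get(r, 0) for k in kernels.values()) for r in device}
    return {r: totals[r] / device[r] for r in device}


def fits(kernels, device, headroom=0.8):
    """True if every resource stays at or below `headroom` of capacity."""
    return all(u <= headroom for u in utilization(kernels, device).values())


print({r: round(u, 2) for r, u in utilization(KERNELS, DEVICE).items()})
print(fits(KERNELS, DEVICE))
```

With these numbers the three kernels need about 89% of the LUTs, so the check fails; dropping the `crypto` kernel brings every resource under the margin.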

Debugging and Testing: Debugging FPGA designs is typically harder than debugging software, because internal signals are not directly visible while the hardware runs. Simulation environments and on-chip debugging tools are essential.

Maintenance: As workloads evolve, FPGA accelerators may require reprogramming or reconfiguration, which can be cumbersome.

FPGA vs. GPU and CPU in Edge Computing

As edge computing grows in importance for applications such as autonomous vehicles, IoT devices, and real-time analytics, choosing the right hardware accelerator is key to maximizing performance, power efficiency, and cost-effectiveness. This section compares field-programmable gate arrays (FPGAs) with traditional central processing units (CPUs) and graphics processing units (GPUs) in the context of edge computing.

A. Comparing FPGA Performance to CPUs and GPUs

Parallel Processing:

- CPUs: CPUs are designed for general-purpose computing, making them versatile but often less efficient for highly parallel workloads.

- GPUs: GPUs excel in parallel processing, making them suitable for tasks like graphics rendering and deep learning.

- FPGAs: FPGAs can be configured to perform specific parallel tasks efficiently, providing performance gains for tailored workloads.

Latency:

- CPUs: CPUs offer low latency but may struggle with highly parallel, computationally intensive tasks.

- GPUs: GPUs can complete large parallel workloads faster than CPUs, although data transfers and batching can add latency for small or individual requests.

- FPGAs: FPGAs can achieve ultra-low latency by customizing hardware logic for specific tasks, ideal for real-time edge applications.

Energy Efficiency:

- CPUs: High-performance CPUs can be power-hungry on compute-intensive workloads, which may make them unsuitable for such tasks on battery-powered edge devices.

- GPUs: GPUs provide excellent performance per watt but might still be power-hungry for some edge use cases.

- FPGAs: FPGAs can be highly power-efficient because the circuit is built for the specific task rather than for general-purpose instruction execution, making them well suited to edge devices with stringent power constraints.
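Energy-efficiency comparisons are most meaningful per unit of work: energy per task is power multiplied by the time the task takes, so a device that draws less power but runs longer can still use more energy, and vice versa. The sketch below does this arithmetic; the power and latency figures are hypothetical, not benchmark results for any real GPU or FPGA.

```python
def energy_per_inference_mj(power_w: float, latency_ms: float) -> float:
    """Energy for one inference in millijoules: E = P * t, and 1 W * 1 ms = 1 mJ."""
    return power_w * latency_ms


# Hypothetical operating points, for illustration only.
candidates = {
    "gpu": {"power_w": 15.0, "latency_ms": 4.0},
    "fpga": {"power_w": 6.0, "latency_ms": 6.0},
}
for name, point in candidates.items():
    print(name, energy_per_inference_mj(**point), "mJ")
```

Here the FPGA is slower per inference but still uses less energy (36 mJ versus 60 mJ); with different assumed numbers the ranking could easily flip.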

B. Scalability and Power Efficiency Considerations

Scalability:

- CPUs: CPUs can scale vertically, adding more processing power with faster processors.

- GPUs: GPUs offer horizontal scalability by adding more GPU cards, suitable for certain parallel workloads.

- FPGAs: FPGAs provide the ability to scale both vertically (by upgrading FPGA cards) and horizontally (by adding more FPGA devices), allowing flexibility in accommodating diverse edge workloads.

Power Efficiency:

- CPUs: CPUs consume more power as clock frequencies increase, impacting power efficiency.

- GPUs: GPUs are power-efficient for parallel tasks but may not be as adaptable as FPGAs for optimizing power consumption.

- FPGAs: FPGAs excel in power efficiency, with the ability to tailor power consumption to the specific workload, which is critical for battery-operated edge devices.

C. Hybrid Approaches Combining FPGA, CPU, and GPU

Heterogeneous Computing:

- Combining CPUs, GPUs, and FPGAs in a single system enables a heterogeneous computing approach.

- Heterogeneous systems can leverage the strengths of each component for different parts of an edge workload, optimizing both performance and energy efficiency.

Example Applications:

- Autonomous Vehicles: FPGAs for real-time sensor data processing, GPUs for deep learning, and CPUs for control tasks.

- IoT Gateways: FPGAs for preprocessing sensor data, CPUs for management, and GPUs for analytics.
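The placement described in these examples can be expressed as a simple mapping from task type to device. The sketch below is a hypothetical, static policy (the task kinds, the `PLACEMENT` table, and the CPU fallback are assumptions); real systems would also weigh current load, data location, and transfer costs.

```python
from dataclasses import dataclass

# Hypothetical policy following the examples above: streaming preprocessing on
# the FPGA, deep learning and analytics on the GPU, control and management on the CPU.
PLACEMENT = {
    "sensor_preprocessing": "FPGA",
    "deep_learning": "GPU",
    "analytics": "GPU",
    "control": "CPU",
    "management": "CPU",
}


@dataclass
class Task:
    name: str
    kind: str


def assign(tasks, available):
    """Map each task to its preferred device, falling back to the CPU when
    the preferred accelerator is not present or the task kind is unknown."""
    plan = {}
    for t in tasks:
        preferred = PLACEMENT.get(t.kind, "CPU")
        plan[t.name] = preferred if preferred in available else "CPU"
    return plan


gateway = [
    Task("filter_vibration", "sensor_preprocessing"),
    Task("anomaly_model", "analytics"),
    Task("fleet_sync", "management"),
]
print(assign(gateway, available={"CPU", "FPGA"}))
```

In this example gateway has no GPU, so the analytics task falls back to the CPU while preprocessing stays on the FPGA.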

Conclusion

In summary, adding Field-Programmable Gate Arrays (FPGAs) to edge computing represents a major leap in the power of distributed processing. Their reconfigurability, power efficiency, and scalability make FPGAs essential in many edge applications. Although obstacles remain, ongoing developments and coordinated efforts promise to establish FPGAs as a cornerstone technology, advancing edge computing into an era of unprecedented efficiency and responsiveness. Going forward, FPGAs and edge computing will continue to shape a future of smarter, faster, and more capable edge systems and devices.
