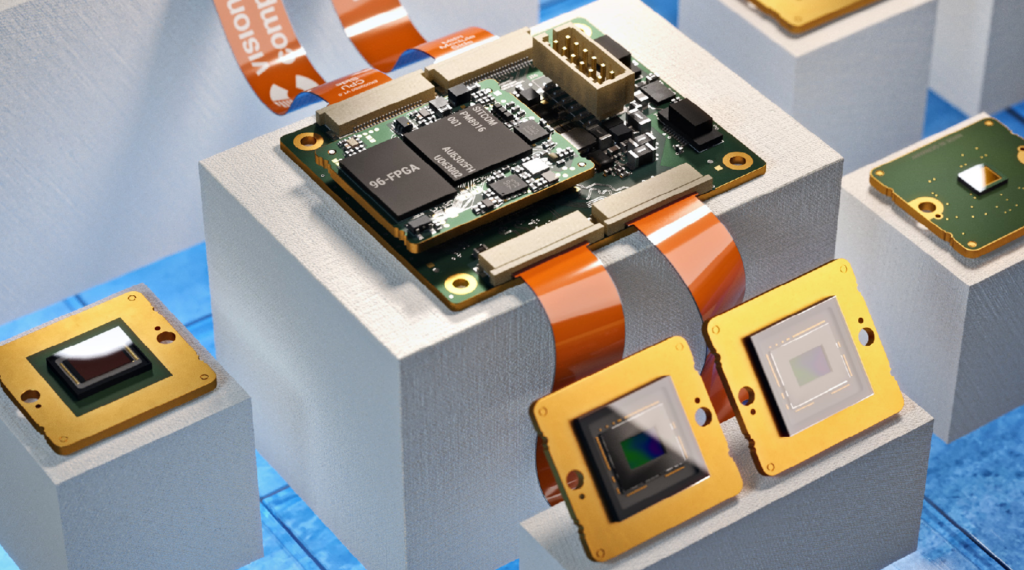

FPGA-based acceleration offers a flexible and high-performance solution for computational tasks. Field-Programmable Gate Arrays (FPGAs) can be configured and reconfigured to match specific workloads, providing advantages over traditional CPUs and GPUs. FPGA-based acceleration enables faster processing, lower power consumption, and customizable hardware configurations.

Applications span machine learning, data processing, and telecommunications. While design complexity and system integration remain challenging, FPGA-based acceleration has proven itself in real-world deployments and holds promising future potential.

This blog series explores FPGA-based acceleration’s benefits, applications, design considerations, and emerging trends. Discover the power of FPGA-based acceleration in driving computational performance.

Understanding FPGA-based Acceleration

What is FPGA-based acceleration?

FPGA-based acceleration is the use of Field-Programmable Gate Arrays to offload computationally heavy operations from conventional CPUs and speed up their execution. Unlike CPUs and GPUs, whose hardware architecture is fixed, FPGAs can be reprogrammed to implement custom hardware designs, allowing for effective parallel processing and algorithm-specific optimizations.

Advantages of FPGA-based acceleration:

Higher performance and throughput: FPGAs process data in parallel across custom hardware pipelines. For workloads that map well to this model, they can deliver higher throughput than CPUs and, in some cases, GPUs.

Lower power consumption and energy efficiency: Because an FPGA implements only the logic a given workload needs, it can often achieve comparable or superior performance at lower power than other acceleration methods.

Customizability and reconfigurability: FPGAs can be customized and reprogrammed to implement specific hardware designs, making them adaptable to different algorithms and applications. This flexibility enables fine-tuning for maximum performance.

Reduced latency and improved real-time processing: FPGA-based acceleration can significantly reduce processing latency, making it suitable for real-time applications that demand rapid response times.

Applications of FPGA-based acceleration:

Machine Learning and Artificial Intelligence: FPGAs have become increasingly popular for accelerating machine learning and AI workloads. FPGA-based AI accelerators are used mainly for low-latency inference, and in some cases for training, enabling faster and more efficient execution of deep learning algorithms.

Data Processing and Analytics: FPGAs can be employed to accelerate data-intensive tasks in database operations and real-time analytics. They enable the rapid processing of large datasets and complex queries, facilitating faster decision-making and insights extraction.

Network Infrastructure and Telecommunications: FPGA-based acceleration plays a crucial role in speeding up network functions such as packet processing, encryption/decryption, and protocol parsing. FPGAs increase network throughput, reduce latency, and enable high-bandwidth data processing in telecommunications systems.

Building FPGA-based Accelerators:

Designing an FPGA-based accelerator involves several steps that encompass both hardware and software components.

Algorithm Analysis and Optimization:

- The first step is to find the computationally demanding sections of the target algorithm that can be accelerated.

- These sections frequently exhibit regular patterns and are parallelizable, making them ideal for FPGA implementation.

- To increase parallelism and decrease latency, the algorithm may be optimized using strategies such as loop unrolling, pipelining, and data tiling.
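To make the effect of pipelining and loop unrolling concrete, here is a minimal sketch of a cycle-count estimate. It is an idealized model, not the output of any real tool: the function name `loop_cycles` and its parameters are made up for illustration, and it assumes that unrolling does not lengthen a single iteration and that the FPGA has enough logic and memory ports for the extra parallel copies.

```python
import math


def loop_cycles(trip_count, iteration_latency, initiation_interval=None, unroll=1):
    """Estimate clock cycles for a loop under a simple, idealized model.

    trip_count: number of iterations in the original loop
    iteration_latency: cycles one iteration takes from start to finish
    initiation_interval: cycles between starting consecutive iterations when
        the loop is pipelined; None means the loop is not pipelined
    unroll: number of original iterations executed side by side per pass
    """
    passes = math.ceil(trip_count / unroll)
    if passes == 0:
        return 0
    if initiation_interval is None:
        # Each pass must finish before the next one starts.
        return passes * iteration_latency
    # Pipelined: the first pass fills the pipeline, then a new pass starts
    # every `initiation_interval` cycles.
    return iteration_latency + (passes - 1) * initiation_interval


baseline = loop_cycles(1024, 4)                                   # 4096 cycles
pipelined = loop_cycles(1024, 4, initiation_interval=1)           # 1027 cycles
pipelined_unrolled = loop_cycles(1024, 4, initiation_interval=1, unroll=4)  # 259 cycles
```

In this toy example, pipelining alone cuts the estimate by roughly 4x, and unrolling by a factor of 4 on top of that cuts it by roughly another 4x. Real designs rarely scale this cleanly, since memory bandwidth and data dependencies often limit the achievable initiation interval.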

Hardware Design:

- The hardware design step starts after the algorithm has been optimized. It defines the architecture that will run the accelerated algorithm on the FPGA.

- To achieve the necessary functionality, the designer develops modules, interconnects, and memory structures.

- To increase productivity and shorten design time, high-level synthesis (HLS) tools can be used to generate the hardware design from a high-level programming language, such as C, C++, or OpenCL.

Software Development:

- The software used for FPGA-based acceleration is essential for setting up and managing the FPGA.

- Software development includes writing the drivers, interfaces, and control software that communicate with the FPGA.

- This software loads the bitstream onto the FPGA, controls data transfers between the host system and the FPGA, and coordinates the execution of the accelerated algorithm.
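The host-side responsibilities described above can be sketched as a short control flow. Everything here is hypothetical: `SimulatedDevice` is a pure-Python stand-in, not a real vendor runtime or driver API, and the "bitstream" is simply a Python function. The point is only to show the order of operations: program the device, copy data in, run the kernel, copy results out.

```python
class SimulatedDevice:
    """Hypothetical stand-in for an FPGA board; not a real vendor API."""

    def __init__(self):
        self.kernel = None
        self.buffers = {}

    def load_bitstream(self, kernel):
        # A real runtime would program the FPGA from a bitstream file here.
        self.kernel = kernel

    def write_buffer(self, name, data):
        # Host-to-device transfer, modeled as a copy.
        self.buffers[name] = list(data)

    def run(self, src, dst):
        # Start the kernel and wait for it to finish.
        if self.kernel is None:
            raise RuntimeError("no bitstream loaded")
        self.buffers[dst] = self.kernel(self.buffers[src])

    def read_buffer(self, name):
        # Device-to-host transfer, modeled as a copy.
        return list(self.buffers[name])


def scale_by_two(values):
    """Toy 'accelerated' kernel used only for this illustration."""
    return [2 * v for v in values]


def run_on_device(device, data):
    device.load_bitstream(scale_by_two)   # 1. configure the FPGA
    device.write_buffer("in", data)       # 2. send input data
    device.run("in", "out")               # 3. execute the kernel
    return device.read_buffer("out")      # 4. fetch the results


result = run_on_device(SimulatedDevice(), [1, 2, 3])  # [2, 4, 6]
```

Real host code follows the same four steps, but each one goes through a driver or vendor runtime and usually runs asynchronously so that transfers and computation can overlap.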

Hardware-Software Co-Design:

- Hardware-software co-design is crucial to maximizing the performance and efficacy of FPGA-based accelerators.

- This approach carefully partitions the algorithm between the host CPU and the FPGA.

- A balanced system can be created by offloading computationally demanding elements to the FPGA and using the CPU for control and host-device communication.
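A quick way to reason about a partitioning choice is an Amdahl's-law style estimate that also charges for host-device transfers. The sketch below is a back-of-the-envelope model under stated assumptions: the function name and all numbers are illustrative, and it assumes transfers do not overlap with computation.

```python
def estimated_speedup(total_time, offload_fraction, kernel_speedup, transfer_time=0.0):
    """Estimate end-to-end speedup when part of a workload moves to the FPGA.

    total_time: runtime of the original CPU-only program (seconds)
    offload_fraction: share of that runtime spent in the offloaded section (0..1)
    kernel_speedup: how much faster the FPGA runs the offloaded section
    transfer_time: extra seconds spent moving data between host and FPGA
    """
    cpu_part = total_time * (1.0 - offload_fraction)
    fpga_part = total_time * offload_fraction / kernel_speedup
    return total_time / (cpu_part + fpga_part + transfer_time)


# Illustrative numbers: 10 s program, 80% offloadable, kernel runs 20x faster.
ideal = estimated_speedup(10.0, 0.8, 20.0)                            # roughly 4.2x
with_transfers = estimated_speedup(10.0, 0.8, 20.0, transfer_time=0.5)  # roughly 3.4x
```

Even with a 20x faster kernel, the part left on the CPU and the cost of data movement cap the overall gain, which is why the partition itself deserves as much attention as the accelerator.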

Challenges and Considerations:

Designing and implementing FPGA-based accelerators present several challenges and considerations that need to be addressed:

Cost and Resource Constraints:

An FPGA has a finite supply of hardware resources, including logic units, memory, and DSP blocks. The design must be optimized to fit within these limits while still meeting its performance targets. Balancing cost, performance, and power usage requires careful resource management and trade-offs.
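As a rough illustration of this kind of trade-off, the sketch below computes how many parallel processing lanes fit on a device once the fixed logic is accounted for. The resource names, the budget, and the per-lane costs are all made-up numbers, not figures for any real FPGA; in practice they would come from synthesis reports.

```python
def max_parallel_lanes(budget, fixed_cost, per_lane_cost):
    """Return the largest lane count that fits every resource budget.

    budget: available amount of each resource on the device
    fixed_cost: resources used once, regardless of lane count
    per_lane_cost: resources each additional parallel lane consumes
    """
    limits = []
    for resource, available in budget.items():
        remaining = available - fixed_cost.get(resource, 0)
        if remaining < 0:
            return 0  # the fixed logic alone does not fit
        cost = per_lane_cost.get(resource, 0)
        if cost > 0:
            limits.append(remaining // cost)
    if not limits:
        raise ValueError("per_lane_cost must use at least one budgeted resource")
    # The scarcest resource determines how far the design can scale.
    return min(limits)


# Hypothetical device and design numbers, for illustration only.
budget = {"lut": 100_000, "dsp": 240, "bram": 280}
fixed_cost = {"lut": 20_000, "bram": 40}
per_lane_cost = {"lut": 3_000, "dsp": 8, "bram": 6}

lanes = max_parallel_lanes(budget, fixed_cost, per_lane_cost)  # 26, limited by LUTs
```

Here the design runs out of LUTs before it runs out of DSP blocks or block RAM, so reducing per-lane logic would be the most effective way to add parallelism.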

Design Complexity and Verification:

FPGA design can be challenging, particularly for larger and more elaborate accelerators. Rigorous verification is necessary to build confidence that the design will perform as intended. Simulation, formal verification, and hardware emulation are some of the methods used to test the behavior of the design and identify potential faults.
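Simulation-based verification usually compares the accelerator's output against a trusted "golden" reference on many test vectors. The sketch below is a software analogue of that idea, not a real hardware testbench: a tiled matrix multiply, restructured the way an accelerator might process on-chip blocks, is checked against a straightforward reference. The function names and the tile size are illustrative choices.

```python
import random


def matmul_reference(a, b):
    """Golden model: plain matrix multiply, written for clarity."""
    n, k, m = len(a), len(b), len(b[0])
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
            for i in range(n)]


def matmul_tiled(a, b, tile=2):
    """Design under test: same result, computed block by block."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0] * m for _ in range(n)]
    for i0 in range(0, n, tile):
        for j0 in range(0, m, tile):
            for p0 in range(0, k, tile):
                # Work on one tile at a time, as an on-chip buffer would.
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        for p in range(p0, min(p0 + tile, k)):
                            c[i][j] += a[i][p] * b[p][j]
    return c


def run_testbench(trials=20, seed=0):
    """Compare both versions on random integer matrices of random shapes."""
    rng = random.Random(seed)
    for _ in range(trials):
        n, k, m = (rng.randint(1, 5) for _ in range(3))
        a = [[rng.randint(-8, 8) for _ in range(k)] for _ in range(n)]
        b = [[rng.randint(-8, 8) for _ in range(m)] for _ in range(k)]
        if matmul_tiled(a, b) != matmul_reference(a, b):
            return False
    return True


all_passed = run_testbench()
```

Random shapes that are not multiples of the tile size are included on purpose, since edge tiles are a common source of bugs. A real flow would run the same kind of comparison in an HDL simulator or an HLS tool's simulation step.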

System Integration and Scalability:

FPGA-based accelerators are frequently part of larger systems, so they must connect cleanly to the other components. Integration involves interfacing with communication links, memory subsystems, and CPUs. Scalability is another factor to consider: the accelerator should be easy to expand and modify as requirements change.

Conclusion:

Creating and implementing FPGA-based accelerators requires an organized process comprising algorithm analysis, hardware design, software development, and hardware-software co-design.

Engineers can produce efficient hardware designs by using FPGA development tools like Vivado or Quartus Prime together with hardware description languages like Verilog or VHDL. High-level synthesis tools generate hardware designs from high-level programming languages, which speeds up the process even more.

Each stage contributes to the final result. Algorithm analysis and optimization identify performance-critical sections and apply strategies like loop unrolling and pipelining. Hardware design implements the accelerated algorithm efficiently by constructing modules, interconnects, and memory structures. Software development provides the host-side control that ties the system together.
